Refined Plane Segmentation for Cuboid-Shaped Objects by Leveraging Edge Detection

by Alexander Naumann, Laura Dörr, Niels Ole Salscheider, Kai Furmans.

© 2020, IEEE.

Abstract

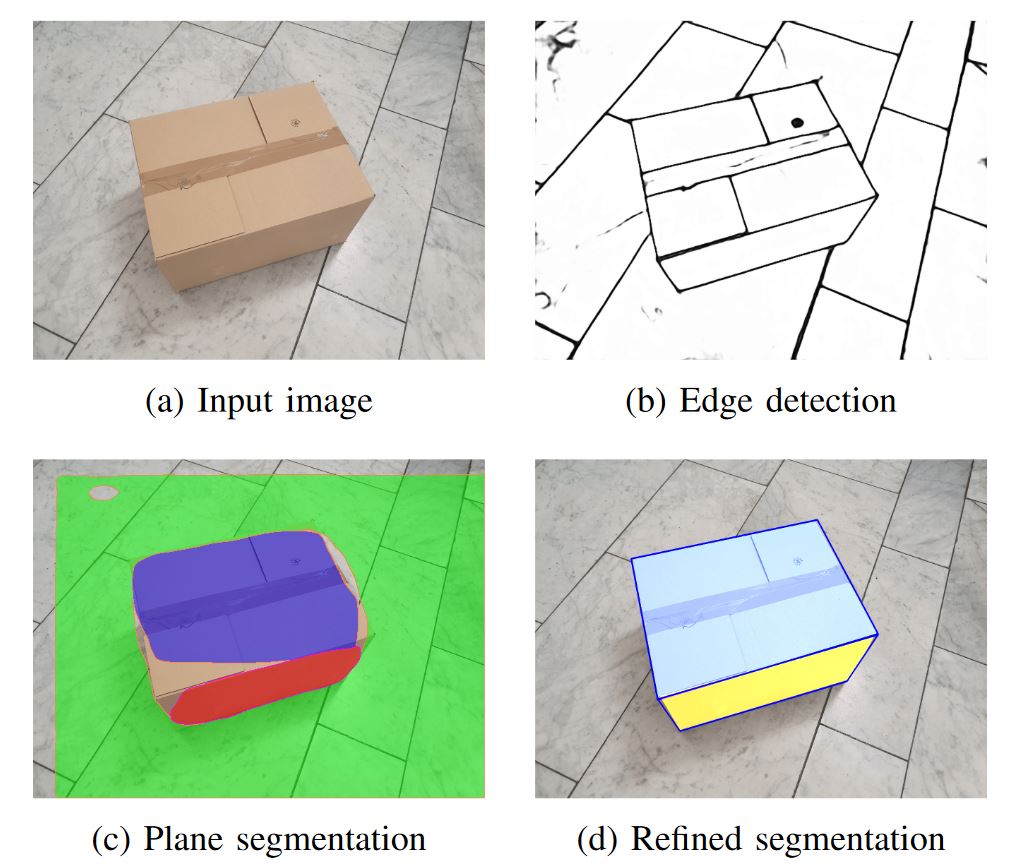

Recent advances in the area of plane segmentation from single RGB images show strong accuracy improvements and now allow a reliable segmentation of many indoor scenes into planes. Nonetheless, the fine-grained details of these segmentation masks still lack accuracy, which restricts the usability of such techniques on a larger scale in numerous applications, such as inpainting for Augmented Reality use cases. We propose to enhance deep-learning-based methods with classical model-based approaches by aligning the segmented plane masks with edges detected in the image. This allows us to increase the accuracy of state-of-the-art approaches, while limiting ourselves to cuboid-shaped objects. Our approach is motivated by logistics, where this assumption is valid and refined planes can be used to perform robust object detection without the need for supervised learning. Results for two baselines and our approach are reported on our own dataset, which we made publicly available. The results show a consistent improvement over the state-of-the-art. The influence of the prior segmentation and the edge detection is investigated, and finally, areas for future research are proposed.
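
The idea of aligning a coarse plane mask with detected edges can be illustrated with a toy sketch. This is not the authors' pipeline, which the abstract does not describe in detail. The function name `snap_mask_rows_to_edges`, the `max_shift` parameter, and the row-wise snapping rule are assumptions made purely for illustration. The sketch assumes that a binary plane mask and a binary edge map are already available, and that each mask row forms a single span, which is plausible for a cuboid face.

```python
import numpy as np


def snap_mask_rows_to_edges(mask, edges, max_shift=3):
    """Hypothetical helper: for each row, move the left and right mask
    boundary to the nearest edge pixel, if one lies within max_shift columns."""
    refined = np.zeros(mask.shape, dtype=bool)
    for y in range(mask.shape[0]):
        cols = np.flatnonzero(mask[y])
        if cols.size == 0:
            continue  # this row is not covered by the plane mask
        left, right = cols[0], cols[-1]
        edge_cols = np.flatnonzero(edges[y])
        if edge_cols.size:
            # Nearest edge column to each of the two boundaries.
            near_left = edge_cols[np.argmin(np.abs(edge_cols - left))]
            near_right = edge_cols[np.argmin(np.abs(edge_cols - right))]
            if abs(near_left - left) <= max_shift:
                left = near_left
            if abs(near_right - right) <= max_shift:
                right = near_right
        # Fill the (possibly shifted) span; holes inside the span are closed.
        refined[y, left:right + 1] = True
    return refined


# Toy example: the coarse mask covers columns 2..6,
# while the image edges lie at columns 1 and 8.
coarse = np.zeros((5, 10), dtype=bool)
coarse[:, 2:7] = True
edge_map = np.zeros((5, 10), dtype=bool)
edge_map[:, 1] = True
edge_map[:, 8] = True

refined = snap_mask_rows_to_edges(coarse, edge_map, max_shift=3)
```

In this toy case the refined mask extends to the edge columns on both sides. A smaller `max_shift` leaves a boundary untouched when no edge is close enough, so a poor edge map cannot pull the mask far from the prior segmentation.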